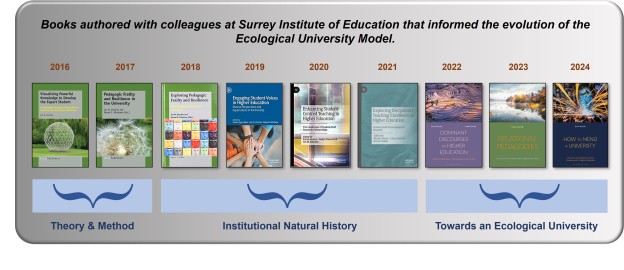

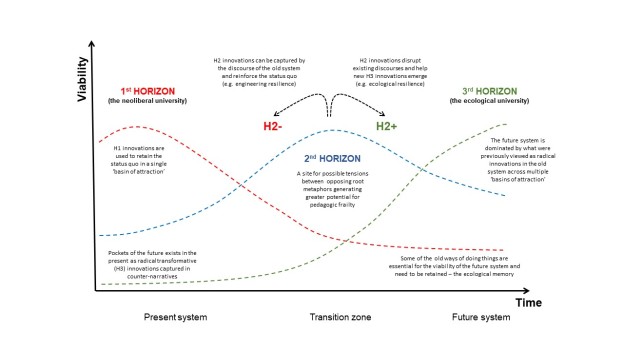

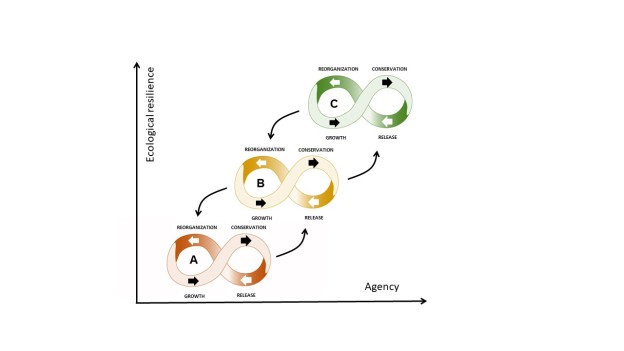

Thanks to all those colleagues who have written or contributed to the books from the Surrey Institute of Education, published over the past ten years or so, that have informed the evolution of the Ecological University Model explored in ‘How to Mend a University’. I couldn’t have got there without you.